When incidents like the recent Resolv exploit occur, the instinct is to search for the point of failure: a compromised key, a missed vulnerability, a breakdown in process. Something must have gone wrong. But in many cases, nothing actually breaks. The system behaves exactly as it was designed to. Resolv had been through the standard security measures, including as many as 18 audits. Yet, in the simplest telling, an attacker got a key, used it to print money, and sold the fake money before anyone noticed.

When Systems Work as Designed

The Resolv incident is a good example of this. Funds moved, positions were affected, and value was extracted. From the outside, it looks like an attack. From the inside, it looks like the system executing a valid path. The more important question isn't how access was obtained—it's what that access allowed.

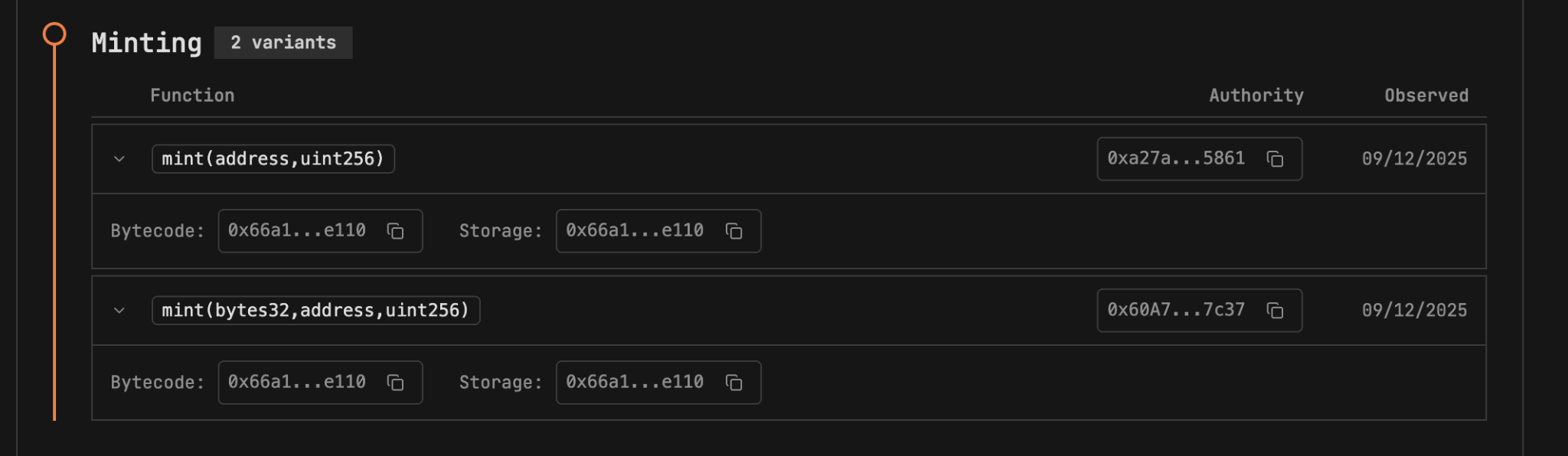

The Critical Capability: Minting Authority

In this case, the critical capability wasn't obscure. It was foundational: the ability to mint tokens.

That single permission changes the entire risk profile of a system. A contract that can mint is not just tracking value—it can create it. And whoever controls that permission effectively controls supply, pricing dynamics, and downstream liquidity. If that authority is concentrated or insufficiently constrained, the system carries an embedded form of risk that doesn't require a bug to materialize. It only requires use.

Seen through that lens, the exploit wasn't the creation of a new capability. It was the activation of an existing one. The mint function didn't behave incorrectly. It behaved exactly as permitted—just in a way that the system could not absorb.
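To make the point concrete, here is a minimal Python sketch of a token whose mint function is gated only by a privileged key. It is a toy model under stated assumptions, not Resolv's contract: the `MintableToken` class, the addresses, and the amounts are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MintableToken:
    """Toy model of a token whose supply is controlled by one privileged key."""
    minter: str                      # hypothetical privileged address
    total_supply: int = 0
    balances: dict = field(default_factory=dict)

    def mint(self, caller: str, to: str, amount: int) -> None:
        # The only authorization check is "is the caller the minter?".
        # Nothing ties the amount to collateral, a cap, or a rate limit.
        if caller != self.minter:
            raise PermissionError("caller is not the minter")
        if amount <= 0:
            raise ValueError("amount must be positive")
        self.balances[to] = self.balances.get(to, 0) + amount
        self.total_supply += amount


token = MintableToken(minter="0xMINTER", total_supply=1_000_000)
# Whoever holds the minter key passes every check this model enforces.
token.mint(caller="0xMINTER", to="0xATTACKER", amount=50_000_000)
print(token.total_supply)  # 51000000
```

No line in this model misbehaves. The supply grows fifty-fold because the permission allows it, which is the sense in which the risk requires use rather than a bug.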

Beyond Post-Mortems: Evaluating Pre-Existing Capabilities

This is where most post-mortems fall short. They do an excellent job tracing transactions and explaining how funds moved after the fact. But they often miss the more important question: what capabilities existed before anything happened?

In a deeper sense, the Resolv hack is a story about how DeFi protocols inherit the security assumptions, and the vulnerabilities, of the off-chain infrastructure they depend on. The on-chain smart contract worked perfectly. The broader system design, and the off-chain infrastructure that held the compromised key, apparently did not. If you evaluate the Resolv USR token from that perspective, the signal is straightforward.

The contract had minting authority. That authority was accessible through privileged control. And that combination—supply creation tied to a small set of actors—is one of the clearest indicators of systemic risk in a token. These are not hidden properties. They are visible, definable behaviors—and more importantly, they are interpretable.

A system with mint authority isn't inherently malicious. But it does introduce a specific question that needs to be answered clearly: Under what conditions can supply be created, and who decides? If the answer is "a small set of keys," then the system's risk is no longer hypothetical.
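The question "under what conditions can supply be created, and who decides?" can be turned into a simple checklist. The sketch below is one possible way to encode it. The `MintPolicy` fields and the flag wording are assumptions made for illustration, and the example values do not describe any real token's configuration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MintPolicy:
    """Hypothetical summary of how a token's mint permission is governed."""
    signers: int                        # keys that can take part in authorizing a mint
    threshold: int                      # how many of those keys must approve
    per_mint_cap: Optional[int]         # max tokens per call, None if unbounded
    collateral_checked_on_chain: bool   # is the amount tied to deposited collateral?


def mint_risk_flags(policy: MintPolicy) -> list[str]:
    """Return a plain-language flag for each missing constraint on minting."""
    flags = []
    if policy.threshold <= 1:
        flags.append("single key can create supply")
    if policy.per_mint_cap is None:
        flags.append("no per-mint cap")
    if not policy.collateral_checked_on_chain:
        flags.append("mint amount not tied to collateral on-chain")
    return flags


# Illustrative values only.
policy = MintPolicy(signers=1, threshold=1, per_mint_cap=None,
                    collateral_checked_on_chain=False)
print(mint_risk_flags(policy))
```

A policy that returns an empty list is not thereby safe. The checklist only makes the "who decides" answer explicit enough to be reviewed.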

Behavioral Exposure vs Traditional Vulnerabilities

At TestMachine, we think about this in terms of behavioral exposure. Not just whether a contract has vulnerabilities, but whether it has capabilities that can materially impact users if exercised.

Minting is one of the clearest examples. Alongside behaviors like confiscation or blacklist control, it represents a class of permissions that directly affect ownership and value. These are not edge cases. They are core mechanics. Which points to a broader shift in how smart contract risk should be evaluated.

For years, the industry has focused on vulnerabilities—cases where code behaves incorrectly. But many of the most significant losses don't come from broken logic. They come from valid logic used exactly as written.

The Permissions Problem

That's not a vulnerability problem. It's a permissions problem—a question of what the system allows to happen under legitimate conditions. Instead of asking whether a contract can be exploited, it may be more useful to ask: What happens if the most powerful function in this contract is used to its fullest extent?

In the case of Resolv, that function was minting. And once it was used, the outcome wasn't surprising. It was inevitable.
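One way to ask what happens when the most powerful function is used to its fullest extent is to simulate it. The sketch below assumes a fee-less constant-product pool (x * y = k) and hypothetical reserves. It does not use figures from the Resolv incident and only shows the shape of the calculation.

```python
def sell_into_pool(token_reserve: float, stable_reserve: float, tokens_in: float):
    """Swap tokens_in into a fee-less constant-product pool (x * y = k).

    Returns (stable_out, new_price), where price is stablecoins per token.
    """
    k = token_reserve * stable_reserve
    new_token_reserve = token_reserve + tokens_in
    new_stable_reserve = k / new_token_reserve
    stable_out = stable_reserve - new_stable_reserve
    return stable_out, new_stable_reserve / new_token_reserve


# Hypothetical pool: 10M tokens against 10M stablecoins, so the price starts at 1.00.
# An unconstrained minter creates 40M tokens and sells them all into the pool.
out, price = sell_into_pool(10_000_000, 10_000_000, 40_000_000)
print(round(out), round(price, 4))  # 8000000 0.04
```

Under these assumptions the minter extracts 8M of the pool's 10M stablecoins and leaves the token priced at 0.04. The result follows from arithmetic on the permission, without any bug in the pool or the token.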

A New Framework for Risk Assessment

The lesson here isn't just about key management. It's about recognizing that in smart contracts, permissions define reality. If a system allows supply to be created at will, then that possibility must be treated as part of the system—not as an exception to it. Nothing unusual has to happen for things to go wrong. Everything just has to work as designed.

This is where AI-powered security analysis can help. By enumerating a contract's permissions and simulating what happens when they are exercised, it can surface risks that code-focused audits tend to miss. Real-time monitoring and automated response mechanisms are now a necessity, not a luxury: exploits unfold in minutes, which leaves no time to react once the damage is visible.
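As a rough illustration of what real-time monitoring of mint activity could look like, the sketch below flags any rolling window in which minted volume exceeds a chosen share of supply. The `MintMonitor` class, the one-hour window, and the 5% threshold are all assumptions. A production system would read mint events from the chain and connect the alert to a pause mechanism.

```python
from collections import deque


class MintMonitor:
    """Flags when minted volume in a rolling window exceeds a share of supply."""

    def __init__(self, window_seconds: int, max_fraction: float):
        self.window_seconds = window_seconds
        self.max_fraction = max_fraction
        self.events = deque()  # (timestamp, amount) pairs, oldest first

    def observe(self, timestamp: int, amount: int, supply_before: int) -> bool:
        """Record a mint and return True if an alert (or pause) should fire."""
        self.events.append((timestamp, amount))
        # Drop events that have aged out of the window.
        while self.events and self.events[0][0] <= timestamp - self.window_seconds:
            self.events.popleft()
        minted = sum(a for _, a in self.events)
        # Approximation: compares windowed volume to supply before this mint.
        return minted > self.max_fraction * supply_before


monitor = MintMonitor(window_seconds=3600, max_fraction=0.05)
print(monitor.observe(timestamp=0, amount=10_000, supply_before=1_000_000))        # False
print(monitor.observe(timestamp=600, amount=50_000_000, supply_before=1_010_000))  # True
```

The check is deliberately simple. Its value lies in running before the minted tokens reach a market, not in the sophistication of the rule.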

Final Takeaway: Permissions Define Reality

In smart contracts, permissions define what is possible, and what is possible eventually becomes reality. For Resolv, that meant a mint function that worked exactly as permitted, used in a way the system could not absorb.